Why Most AI Implementations Fail (And It's Not the Technology)

This is why your employees are resisting AI initiatives.

Here’s a scenario I see playing out constantly: A 25-person marketing agency buys an enterprise AI content generation platform. The founder is excited: finally, a way to scale content production without hiring more writers. Six months later, only two people have logged in. Zero pieces of content have been generated. And the company is paying $4,000 a month for software that’s gathering digital dust.

Meanwhile, employees are quietly bringing their own AI to work. Microsoft and LinkedIn’s 2024 Work Trend Index found that 78% of AI users do this, using ChatGPT, Claude, or whatever free tool they can find, often without telling anyone. They’re not avoiding AI. They’re avoiding the tool leadership chose.

What went wrong? The technology worked perfectly. The AI could write, edit, and optimise content exactly as promised. The problem wasn’t the platform. It was human.

This isn’t an isolated story. When RAND Corporation studied AI implementations in 2024, it found that over 80% fail to deliver expected outcomes. That’s twice the failure rate of regular IT projects. By 2025, things had got worse: S&P Global reported that 42% of companies abandoned most of their AI initiatives, up from just 17% the year before.

Here’s what makes this painful: most companies are spending their time and money solving the wrong problem.

The Uncomfortable Truth About AI Failure

When Prosci studied over 1,100 companies implementing AI in 2025, they discovered something that should make every business owner pause. Nearly two-thirds of AI implementation challenges had nothing to do with the technology itself. Not buggy algorithms. Not poor data quality. Not insufficient computing power.

The problem was people: how they were trained, whether they trusted the technology, and whether anyone actually helped them change how they worked.

Your company might be investing heavily in AI platforms and data infrastructure while completely ignoring the real barrier to adoption. You’re treating this like a technology problem when it’s actually a human behaviour problem.

Think about that marketing agency. They bought the best tool on the market. It sat unused because no one asked the team what they needed, no one trained them properly, and no one addressed the elephant in the room: “Will this AI replace me?”

The same Prosci study found that 38% of employees struggle with basic user proficiency. These aren’t people who refuse to try—they’re people facing a steep learning curve with inadequate support. Twenty-two percent report significant difficulties just learning how to use the tools. Eleven percent can’t figure out how to write effective prompts. Six percent say they received essentially no training at all.

This is not a technology barrier. It’s a skills and confidence barrier.

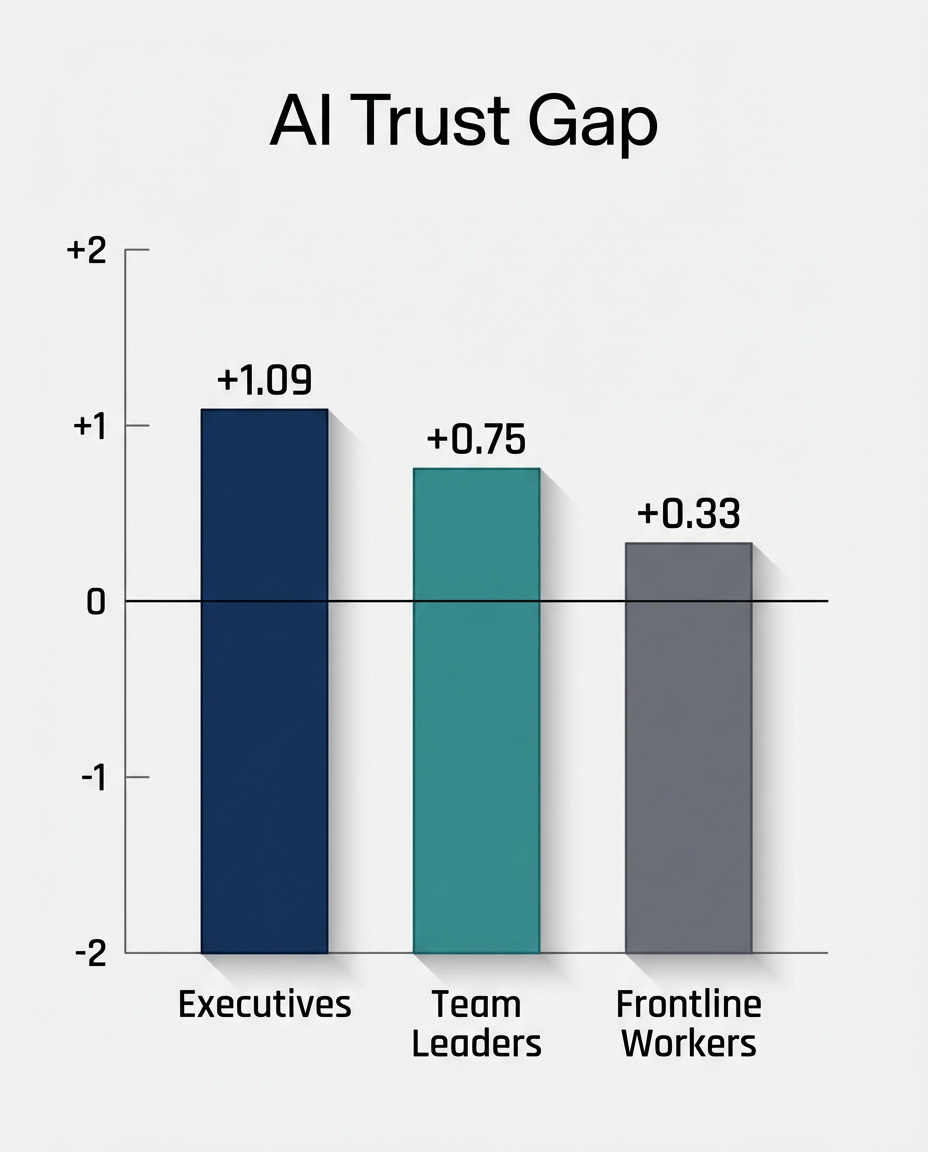

And it gets worse. There’s a massive trust gap between the people making AI decisions and the people expected to use these tools every day.

When researchers measured trust in AI across organisational levels, they found executives scoring +1.09 on a scale from -2 to +2. Frontline workers? They scored +0.33. Leaders are optimistic and confident. The people actually using the tools are sceptical and uncertain.

This creates a dangerous feedback loop. Executives look at usage metrics and think adoption is going well. Meanwhile, frontline employees are quietly struggling, avoiding the tools when possible, and definitely not speaking up about it.

Deloitte’s TrustID Index found that trust in company-provided AI dropped 31% between May and July 2025 alone. Trust in agentic AI, the kind that can take actions on your behalf, dropped 89%.

Here’s what employees are actually worried about: 75% fear that AI will eliminate jobs in general. Sixty-five percent worry specifically about their own job. When three-quarters of your workforce is scared, it doesn’t matter how good your AI platform is.

Why Change Management Is the Missing Piece

This is where most business owners—especially those running small to medium-sized companies—get it wrong. They think about AI as a software purchase. Install the tool, send a company-wide email, maybe do a one-hour demo, and expect people to figure it out.

But AI isn’t just another piece of software. It fundamentally changes how work gets done.

This is where industrial-organisational psychology comes in. I/O psychology is the science of human behaviour at work—and it’s been studying technology adoption since computers first showed up in offices in the 1960s. The field has decades of research on what makes people actually use new tools versus what makes them quietly rebel.

And the single most important framework for AI adoption isn’t about artificial intelligence at all. It’s about change management.

Change management is the process of helping people, teams, and organisations move from how things are now to how things need to be. It focuses on the human side: communication, training, leadership alignment, and helping employees adapt without causing chaos.

AI requires change management more than any technology that’s come before it. Here’s why: previous software typically automated existing processes. You did the same work, just faster or with fewer manual steps. AI often requires you to completely redesign your workflow. It’s not about speeding up what you already do—it’s about doing things differently.

When McKinsey studied companies with successful AI implementations, they found that high-performing organisations were about three times more likely than their peers to have senior leaders visibly committed to the change. Not just “supportive”, but actively demonstrating ownership, using the tools themselves, and talking about it constantly.

There’s a psychological model called UTAUT—Unified Theory of Acceptance and Use of Technology—that explains what makes people actually adopt new tools. It comes down to four questions:

Will this help me do my job better? (Performance Expectancy)

Will it be hard to learn? (Effort Expectancy)

Do my leaders and peers use and support it? (Social Influence)

Is there training, support, and infrastructure? (Facilitating Conditions)

Research shows that the first question—performance expectancy—is the strongest predictor of whether someone will use a new technology. If people don’t believe it will genuinely help them, nothing else matters.
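
If you want to turn those four questions into something you can actually track, one option is a short pulse survey. The sketch below is only an illustration, not part of UTAUT itself: it assumes each person rates four statements from 1 (strongly disagree) to 5 (strongly agree), worded so that a higher score is always more favourable (for example, "this tool is easy to learn" rather than "this tool is hard to learn"). The construct keys, the function names, and the sample responses are all hypothetical.

```python
from typing import Dict, List

# The four UTAUT constructs, paired with the plain-language question from the text.
CONSTRUCTS = {
    "performance_expectancy": "Will this help me do my job better?",
    "effort_expectancy": "Will it be hard to learn?",
    "social_influence": "Do my leaders and peers use and support it?",
    "facilitating_conditions": "Is there training, support, and infrastructure?",
}


def construct_scores(responses: List[Dict[str, int]]) -> Dict[str, float]:
    """Average the 1-5 ratings for each construct across all respondents."""
    return {
        name: sum(r[name] for r in responses) / len(responses)
        for name in CONSTRUCTS
    }


def weakest_construct(scores: Dict[str, float]) -> str:
    """Return the construct with the lowest average rating."""
    return min(scores, key=scores.get)


# Hypothetical survey results from three team members (not real data).
responses = [
    {"performance_expectancy": 2, "effort_expectancy": 4,
     "social_influence": 3, "facilitating_conditions": 2},
    {"performance_expectancy": 1, "effort_expectancy": 3,
     "social_influence": 4, "facilitating_conditions": 3},
    {"performance_expectancy": 2, "effort_expectancy": 4,
     "social_influence": 2, "facilitating_conditions": 2},
]

scores = construct_scores(responses)
for name, score in sorted(scores.items(), key=lambda item: item[1]):
    print(f"{score:.2f}  {CONSTRUCTS[name]}")
print("Start with:", weakest_construct(scores))
```

With these made-up numbers, the lowest average belongs to performance expectancy, which is the signal to show people how the tool helps them before investing in anything else.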

Now think about that marketing agency again. Did anyone demonstrate to the content team how AI would make their jobs better? Or did they just announce that the company bought a new platform and expect everyone to be excited?

What Actually Causes AI Implementations to Fail

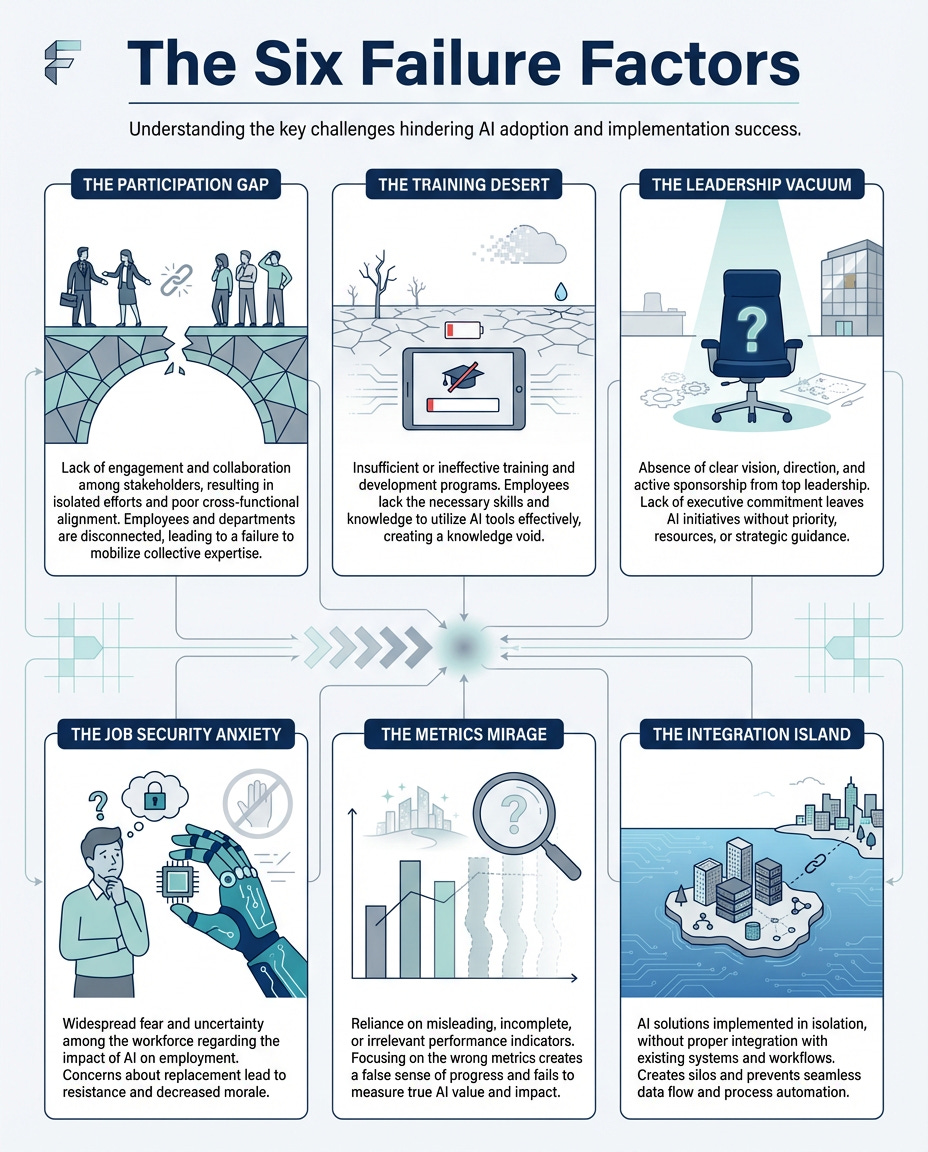

Let me walk you through the six patterns that kill AI adoption. If you’re planning to implement AI, or you’ve already tried and struggled, you’ll probably recognise yourself in these.

The Participation Gap

Leadership decides the company needs AI, picks a tool, and announces it to the team as a done deal. No one asked the people who’d actually use it what they needed or what problems they were trying to solve.

Research on change initiatives consistently shows that when people are involved in decision-making, they’re far more likely to support the change. It’s not just about getting their input—it’s about them having ownership. When change is imposed rather than collaborative, people resist. Not always loudly, but through passive avoidance.

The Training Desert

Most companies do a one-hour demo and then expect people to figure it out. That’s not training—that’s an announcement with screenshots.

What actually works is spaced learning over time, hands-on practice with real work scenarios, and ongoing support when people get stuck. The 70-20-10 model suggests that 70% of learning happens through doing your actual job, 20% through coaching and peer learning, and only 10% through formal training. If you’re only doing that 10% part, you’re missing most of what makes training effective.

The hidden cost of inadequate training is brutal. Someone tries the tool once, struggles, feels stupid, and never opens it again. You’ve just lost that person forever, and they’ll probably warn others away too.

The Leadership Vacuum

If your executives and managers aren’t visibly using the AI tool, why would anyone else?

There’s a psychological principle called modelling—people learn behaviour by watching others, especially those in positions of authority or status. If your leadership team talks about AI but everyone can see they’re not actually using it themselves, the message is clear: this isn’t really important.

The Job Security Anxiety

Nobody wants to say it out loud, but everyone’s thinking it: “Will this replace me?”

Unaddressed fears create passive resistance. People will nod in meetings and then quietly avoid using the tool. They’re not being difficult—they’re protecting themselves from what they perceive as a threat.

This is where transparent communication becomes essential. If you’re implementing AI to augment work, not replace workers, you need to say that explicitly and repeatedly. Show what jobs will look like with AI assistance. Give concrete examples. Make it safe to ask scary questions.

The Metrics Mirage

Companies buy AI tools without defining what success actually looks like. “We’ll figure out how to use it” isn’t a strategy.

This is solution-first thinking—buying technology and then looking for problems to solve. It should work the other way around. What specific outcome are you trying to achieve? How will you measure whether AI helped? What behaviour change are you looking for?
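
Even a very rough measurement beats none. As a minimal sketch, here is how the marketing agency from the opening scenario could have put numbers on its situation after six months. The figures (25 people, two logins, zero pieces of content, $4,000 a month) come from that scenario; the class and function names are hypothetical, and a real review would pull these numbers from the platform's usage reports.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AdoptionSnapshot:
    """Usage figures for one AI tool over a review period."""
    team_size: int         # people expected to use the tool
    active_users: int      # people who actually logged in
    outputs_produced: int  # e.g. pieces of content generated
    monthly_cost: float    # subscription cost per month
    months: int            # length of the review period


def adoption_rate(s: AdoptionSnapshot) -> float:
    """Share of the team that used the tool at all."""
    return s.active_users / s.team_size


def total_spend(s: AdoptionSnapshot) -> float:
    """Total subscription cost over the review period."""
    return s.monthly_cost * s.months


def cost_per_active_user(s: AdoptionSnapshot) -> Optional[float]:
    """Total spend divided by active users; None when nobody used the tool."""
    if s.active_users == 0:
        return None
    return total_spend(s) / s.active_users


# Numbers from the agency scenario at the start of this post.
agency = AdoptionSnapshot(
    team_size=25, active_users=2, outputs_produced=0,
    monthly_cost=4000, months=6,
)

print(f"Adoption rate: {adoption_rate(agency):.0%}")
print(f"Total spend: ${total_spend(agency):,.0f}")
print(f"Cost per active user: ${cost_per_active_user(agency):,.0f}")
print(f"Outputs produced: {agency.outputs_produced}")
```

For the agency, that works out to an 8% adoption rate and $24,000 spent, or $12,000 for each person who ever logged in, with nothing produced.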

The Integration Island

The AI tool sits separately from how people actually work. It’s not embedded in their daily workflow, so using it requires extra steps. And whenever there’s friction, people default to what they’ve always done.

This is why some AI tools succeed and others fail, even when the technology is identical. The successful ones are embedded into the systems people already use. The failed ones require people to go somewhere else, remember to use them, and add extra work to their day.

What Our Marketing Agency Could Have Done Differently

Remember that agency paying $4,000 a month for unused software?

Imagine if they’d approached it differently. What if they’d gathered the content team and asked, “What’s the hardest part of your job right now?” Maybe the team would have said, “We spend hours researching client industries before we can write anything intelligent.”

Then leadership could have said, “We’re exploring AI tools that could do that research phase for you—pull together background, identify key trends, give you a starting point. Would that help?”

Now the team is involved. They understand how this solves a real problem they have. They have ownership.

Next, instead of announcing a purchase, they pilot two or three tools with a small group. They give those people real training: not a demo, but hands-on sessions where they practise using AI for actual client projects. They create a Slack channel where people can ask questions and share what’s working.

The CEO starts every Monday meeting by sharing one specific example of how she used AI that week. The creative director does the same. Leadership isn’t just talking about AI—they’re demonstrating it.

And they address the fear directly: “This tool is meant to eliminate the boring research grunt work so you can spend more time on the creative strategy that only humans can do. We’re not reducing headcount. We’re trying to make your jobs less tedious.”

They define success clearly: “In 90 days, we want to cut research time by 50% and increase the volume of client proposals we can produce. We’ll check in monthly to see if this is actually happening.”
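
A target like that is easy to check at each monthly review. The sketch below assumes the agency tracks average research hours per proposal. The 50% target comes from the goal above, while the baseline and check-in figures are hypothetical placeholders rather than real results.

```python
def reduction(baseline_hours: float, current_hours: float) -> float:
    """Fractional reduction relative to the baseline (0.5 means time was halved)."""
    if baseline_hours <= 0:
        raise ValueError("baseline_hours must be positive")
    return (baseline_hours - current_hours) / baseline_hours


def on_track(baseline_hours: float, current_hours: float,
             target: float = 0.50) -> bool:
    """True when the reduction so far meets or exceeds the target."""
    return reduction(baseline_hours, current_hours) >= target


# Hypothetical figures: average research hours per client proposal.
baseline = 8.0
monthly_checkins = {"Day 30": 6.5, "Day 60": 5.0, "Day 90": 3.8}

for label, hours in monthly_checkins.items():
    status = "target met" if on_track(baseline, hours) else "below target"
    print(f"{label}: {reduction(baseline, hours):.0%} reduction ({status})")
```

The point is not the code. Once the baseline and the target are written down before the pilot starts, "is this working?" has an answer everyone can see.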

The outcome would have been completely different. Not because the technology was different, but because the human side was managed properly.

The Real AI Revolution

Here’s what most people get wrong about the AI revolution. They think it’s about the technology getting smarter, faster, and more capable. That’s happening, sure. But the real revolution will be won by companies that understand that humans are the constraint.

The businesses that succeed with AI won’t be the ones with the most advanced algorithms or the biggest budgets. They’ll be the ones who take the time to help their people actually change how they work.

This is an opportunity for small and medium-sized businesses in particular. You’re more agile than large corporations. You have closer relationships between leadership and frontline employees. You can pivot quickly. You have less bureaucracy to navigate.

But you need to stop treating AI like a purchase and start treating it like a transformation.

That means involving your team in decisions. Investing real time and resources in training. Having leaders model the behaviour you want to see. Addressing job security fears transparently. Defining clear success metrics before you buy anything. And embedding AI into existing workflows rather than creating separate tools that people have to remember to use.

The 80% failure rate isn’t inevitable. It’s a symptom of approaching AI adoption backwards.

The technology is the easy part. Understanding humans—their fears, their motivations, their learning needs, and their resistance patterns—that’s the real challenge.

And that’s also the real opportunity.

If you’re planning to implement AI in your business, start here:

Before you buy another tool, gather your team and ask them one question: “What’s the most tedious, repetitive part of your job that you wish someone else would handle?”

Their answers will tell you exactly where AI can help. And more importantly, you’ve just started the change management process by involving them in the conversation.

Reply to this post with what you learn, and I’ll help you figure out the next step.

References

“2024 Work Trend Index: AI at Work Is Here. Now Comes the Hard Part,” Microsoft/LinkedIn, 2024. https://www.microsoft.com/en-us/worklab/work-trend-index/ai-at-work-is-here-now-comes-the-hard-part

“American Public, Investor and Executive Perspective on Responsible AI Deployment,” Just Capital, December 2025

“Prosci’s Latest Research Reveals Significant Challenges and Opportunities in Managing AI Change,” Prosci, 2025

“AI Adoption Trends: What’s Working in 2025,” S&P Global, 2025

“Why 80% of AI Projects Fail,” RAND Corporation, 2024

“The State of AI in Early 2024: Gen AI Adoption Spikes and Starts to Generate Value,” McKinsey & Company, 2024

Venkatesh, V., et al. “User Acceptance of Information Technology: Toward a Unified View,” MIS Quarterly, 2003

“Deloitte TrustID Index: Trust in GenAI Systems,” Deloitte, 2025

“Global Workforce of the Future 2024,” Adecco Group, 2024